We are pleased to announce a new release of the CSO Classifier (v2.1), an application that automatically classifies research papers according to the Computer Science Ontology (CSO).

Recently, we have been working intensively on improving its scalability, removing its bottlenecks and making sure it can run on large corpora. Specifically, in this version we created a cached version of the word2vec model that directly connects the words in the model vocabulary with the CSO topics. In this way, the classifier can quickly retrieve all CSO topics that can be inferred from the given tokens, which reduces processing time. In addition, since the cache weighs only ~64MB, compared to ~366MB for the actual word2vec model, it also takes less time to load.
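To make the idea of the cache concrete, here is a minimal sketch of a precomputed word-to-topic lookup. It is not the classifier's actual code: the toy embeddings, the topic subset, the 0.9 similarity threshold and the function names are all assumptions made for illustration.

```python
import math

# Toy 2-d embeddings standing in for the word2vec model (values are made up).
EMBEDDINGS = {
    "ontology": (0.9, 0.1),
    "rdf": (0.8, 0.3),
    "semantic_web": (0.85, 0.2),
    "robot": (0.1, 0.9),
    "robotics": (0.15, 0.95),
}

# Hypothetical subset of topics: vocabulary key -> topic label.
TOPICS = {"semantic_web": "semantic web", "robotics": "robotics"}


def cosine(a, b):
    """Cosine similarity between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def build_cache(embeddings, topics, threshold=0.9):
    """Precompute, for every vocabulary word, the topics similar to it."""
    cache = {}
    for word, vec in embeddings.items():
        matches = [
            label
            for key, label in topics.items()
            if cosine(vec, embeddings[key]) >= threshold
        ]
        if matches:
            cache[word] = matches
    return cache


def topics_for_tokens(tokens, cache):
    """Collect topics with plain dictionary lookups; the model is not needed."""
    found = set()
    for token in tokens:
        found.update(cache.get(token, []))
    return found


# The cache is built once, offline; classification only reads from it.
CACHE = build_cache(EMBEDDINGS, TOPICS)
found_topics = topics_for_tokens(["ontology", "rdf", "unknown"], CACHE)
```

The similarity search happens once, when the cache is built. At classification time each token costs a single dictionary lookup, and tokens with no related topic are simply absent from the cache.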

Thanks to this improvement, the CSO Classifier is around 24x faster and can easily be run on large corpora of scholarly data. At the moment, the classifier can annotate one paper in around 1 second, which we consider a great achievement, since the very first prototype required around 20 seconds per paper.

About CSO Classifier

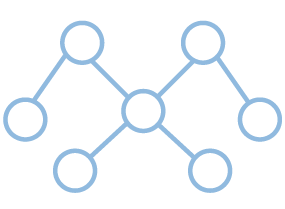

As shown on our resource page, the CSO Classifier consists of two main components: (i) the syntactic module and (ii) the semantic module. The syntactic module parses the input documents and identifies CSO concepts that are explicitly referred to in the document. The semantic module uses part-of-speech tagging to identify promising terms and then exploits word embeddings to infer semantically related topics. The CSO Classifier then combines the results of these two modules and enhances them by including relevant super-areas. More information about the CSO Classifier is available in [1].
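As a rough illustration of the syntactic step and of how the two modules' results are merged, the sketch below matches n-grams from a text against a small set of topic labels and then takes the union with a semantic result. It assumes exact string matching for simplicity, and the topic set, the function names and the hard-coded semantic output are hypothetical, not the classifier's actual implementation.

```python
# Hypothetical handful of topic labels; the real ontology is far larger.
CSO_TOPICS = {"semantic web", "linked data", "machine learning", "ontology"}


def ngrams(tokens, max_n=3):
    """Yield every run of 1..max_n consecutive tokens as a single string."""
    for n in range(1, max_n + 1):
        for i in range(len(tokens) - n + 1):
            yield " ".join(tokens[i:i + n])


def syntactic_topics(text, topics=CSO_TOPICS):
    """Return the topics whose label appears verbatim in the text."""
    tokens = text.lower().replace(",", " ").replace(".", " ").split()
    return {gram for gram in ngrams(tokens) if gram in topics}


def combine(syntactic, semantic):
    """Merge the outputs of the two modules, dropping duplicates."""
    return sorted(set(syntactic) | set(semantic))


abstract = "We publish Linked Data on the Semantic Web using an ontology."
explicit = syntactic_topics(abstract)

# Stand-in for the semantic module's output, hard-coded for illustration.
inferred = {"semantic web", "knowledge base"}

result = combine(explicit, inferred)
```

Here the syntactic step finds the topics named in the text, and the union adds the one topic that only the stand-in semantic result contains.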

Download

The classifier is available through a GitHub repository: https://github.com/angelosalatino/cso-classifier, but you can download the latest release (version v2.1) from:

Functionalities

The function that runs the classifier lets you set two parameters: (i) modules and (ii) enhancement. With the first parameter you decide which of the two modules to run. If you are looking for more precise results and lower computational time (0.5 seconds per paper), you can run only the “syntactic” module. If instead you are looking for more comprehensive results (with a slight impact on precision), you can run “both” modules. With the second parameter you control whether the classifier will try to infer, given a topic (e.g., Linked Data), only its direct super-topics (e.g., Semantic Web) or all its super-topics (e.g., Semantic Web, WWW, Computer Science). With “first”, it infers only the direct super-topics. With “all”, it infers all super-topics. With “no”, it does not perform any enhancement.
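The three enhancement values can be sketched on the Linked Data example above. This is only an illustration under stated assumptions: the three-entry hierarchy and the `enhance` function are hypothetical and do not reproduce the classifier's own code or the full ontology.

```python
# Toy hierarchy built from the example in the text:
# each topic maps to its direct super-topics.
SUPER_TOPICS = {
    "linked data": ["semantic web"],
    "semantic web": ["www"],
    "www": ["computer science"],
}


def enhance(topics, enhancement="first", hierarchy=SUPER_TOPICS):
    """Return the super-topics inferred for the given topics.

    "first" climbs one level, "all" climbs to the root, "no" infers nothing.
    """
    if enhancement not in ("first", "all", "no"):
        raise ValueError("enhancement must be 'first', 'all' or 'no'")
    if enhancement == "no":
        return []

    inferred = []
    seen = set(topics)
    frontier = list(topics)
    while frontier:
        next_frontier = []
        for topic in frontier:
            for parent in hierarchy.get(topic, []):
                if parent not in seen:
                    seen.add(parent)
                    inferred.append(parent)
                    next_frontier.append(parent)
        # "first" stops after one level; "all" keeps climbing.
        frontier = next_frontier if enhancement == "all" else []
    return inferred


direct_only = enhance(["linked data"], "first")
every_level = enhance(["linked data"], "all")
disabled = enhance(["linked data"], "no")
```

With this toy hierarchy, “first” yields only Semantic Web for Linked Data, “all” also adds WWW and Computer Science, and “no” returns an empty list.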

Further information, including how to run the classifier on a single paper or in batch mode, is available in the README file within the repository/release.

References

[1] Salatino, A.A., Osborne, F., Thanapalasingam, T. and Motta, E. 2018. The CSO Classifier: Ontology-Driven Detection of Research Topics in Scholarly Articles. Available as a pre-print here
